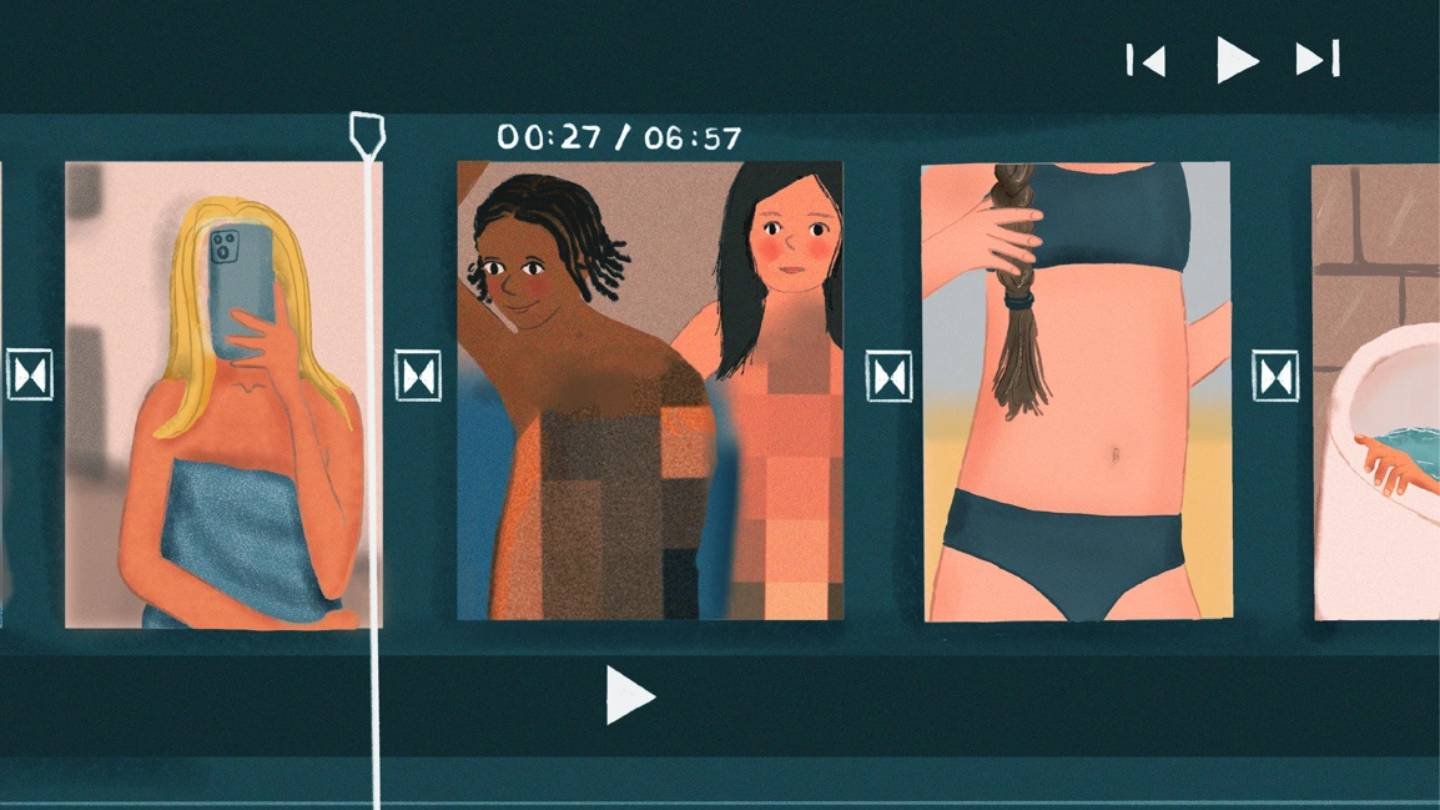

A troubling trend is emerging online: the rise of sexualised AI-generated videos and images. Experts warn that this misuse of generative AI poses serious risks to privacy, safety, and digital trust.

What are sexualised AI clips?

These are artificially created videos or images in which AI tools manipulate faces or bodies to produce explicit or suggestive content, often without the consent of the people depicted. Commonly known as deepfake pornography, such clips are increasingly shared across social media platforms and adult websites.

Why it’s a problem

- Consent & privacy: Many victims are women whose likenesses are used without permission, leaving them vulnerable to harassment and reputational harm.

- Accessibility: As AI tools become easier to use, creating such content no longer requires advanced technical skills, which raises the risk of wider abuse.

- Law & enforcement gaps: While some countries are introducing legislation against non-consensual deepfake content, enforcement remains limited and inconsistent.

The wider implications

Cybersecurity analysts point out that these clips not only harm individuals but also erode trust in digital media. The same technology that can generate fake explicit content could also be weaponised for misinformation, blackmail, or political manipulation.

Efforts to curb misuse

- Tech response: Platforms like Meta, Google, and X (formerly Twitter) have introduced policies banning non-consensual sexual deepfakes, but detection remains a challenge.

- Legal push: Countries including the UK, US, and India are exploring or strengthening laws to criminalise the creation and distribution of such material.

- Awareness & safeguards: Experts stress the need for stronger awareness campaigns, along with watermarking and detection technologies to flag manipulated content.

A call for responsibility

As AI adoption accelerates, experts urge companies and regulators to balance innovation with safeguards. Without stronger protections, victims of sexualised AI clips will continue to face exploitation while digital trust weakens further.
